Why Woebot wants to create a better mental health app

The startup is building out a small engineering office in Dublin.

FOR ALISON DARCY, the field of psychiatry has been slow to adopt technology, even though tech could address significant problems that remain with access to services.

The Irishwoman, who has held academic roles in UCD and DIT, moved to the US over 10 years ago to take up a post-doc in psychiatry at Stanford University. She now heads up mental health tech startup Woebot Labs, based in California.

Before venturing into her own business, Darcy was immersed in academic research and, while at Stanford, collaborated with Andrew Ng, a heavyweight in the field of artificial intelligence.

Ng co-founded Google Brain, the tech giant’s AI research team, co-founded online education company Coursera, and also led AI efforts at Chinese corporation Baidu.

One of the driving forces behind her research was finding a way to present mental health therapies based on clinical studies and best practice through an app.

Darcy believes users need to be wary of any mental health tech apps that make big claims about what they do.

“There’s a really troubling number of apps that don’t have any privacy policy explicitly stated, don’t have any emphasis on demonstrating outcomes or showing that there’s any efficacy,” she said.

Alison Darcy

Darcy wanted to create a higher standard but despite her interest in research, she said she knew that she would have to leave academia if she wanted to build something that would be “really dramatic and impactful”.

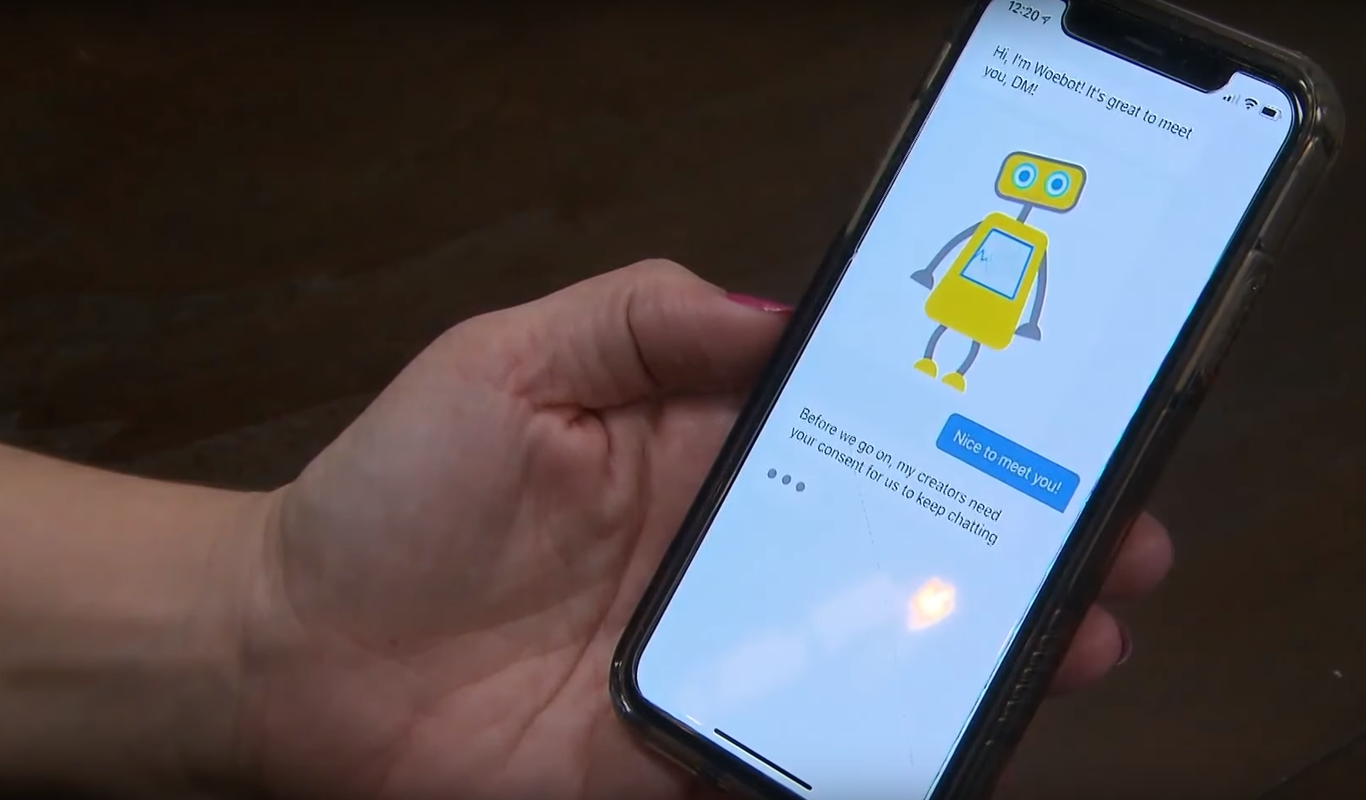

That drive ultimately led to Woebot, an app that engages daily with a user to check in on their moods and provide exercises based on cognitive behavioural therapy (CBT).

Barriers to access

Woebot’s app is backed by clinical research carried out at Stanford, and Darcy said it can help reduce barriers to mental health treatment.

“It’s a very popular idea in my field that if you were going through a difficult time, you should talk to someone,” she said.

“The actual reality on the ground that I was seeing in patients, is that most people will never get to that point where they’re actually able to see somebody.”

At first glance, Woebot looks like the chatbots seen in various apps and websites, but Darcy said this is a very “nebulous” description.

“It’s more of an interaction interface than it is a true conversation,” she said. “It’s not open text back and forth. This isn’t an open-ended conversation. It’s very structured and it mirrors really good clinical decision-making.”

Woebot checks in with the user every day to track their mood.

“If the user reports a negative mood, Woebot will offer an invitation to walk the user through an evidence-based technique based on CBT,” she said.

If the user is feeling good or average, the app takes this as an opportunity to introduce some new exercises that may help the user progress further.

“We find it important to be very transparent about what the service is and what it isn’t. Woebot is not a crisis service. We have our users acknowledge that,” Darcy explained.

The app can detect “crisis language and basically offers the highest level of care that you can give in the constraints you’re dealing with”.

In these instances, it will provide the user with information on outside resources that have been compiled based on advice from suicide prevention experts.

Revenue model

Woebot, which does not disclose user numbers but has raised $8 million from backers including Andrew Ng’s AI Fund, is now on the path to expansion.

As part of its growth strategy, it has opened a small engineering office in Dublin that currently employs two people with three more to come soon.

The company, which has a team of 20 in total, plans to send out some senior engineers to Dublin in the summer to help with growing the office.

However, the startup is still nailing down its revenue model. It is piloting the app with insurance companies that could offer it to their health insurance customers.

Darcy is not opposed to introducing a subscription service for the app either.

“The revenue models that involve advertising or selling data, that’s just not going to be something that we’ll ever do,” she added.

The climate around data privacy has changed drastically of late, shaped by legislation like the General Data Protection Regulation (GDPR) and the myriad scandals that have surrounded Facebook.

Darcy said it’s good that people are more concerned now about their data and how it’s used.

“We’re coming from a clinical trials background. We tend to treat user data in the same way that we would treat data involved in a treatment trial.”

Darcy explained that Woebot’s users remain anonymous: email addresses collected during sign-up are siloed from the chat logs and cannot be linked to them. Data can also be deleted upon request.

This is one of the requirements stipulated by GDPR, which came into force last year.

A US-based company must still comply with these rules if it has European users, which Darcy thinks is a good model regardless of jurisdiction.

“That is a higher standard than the one we’re held to in the US but we chose to go with that.”